There’s been a shift in how digital products get built. Teams are using AI to generate pages, journeys, and even entire websites in a fraction of the time it used to take. From a delivery perspective, it’s impressive. Things get shipped faster, iterations are easier, and the barrier to building is lower than it’s ever been.

But there’s a catch that’s starting to show up more and more.

A lot of these products work technically. They load, they function, they pass checks. Yet when real people start using them, something feels off. Not broken, just not quite right. And that difference is starting to matter.

AI can build fast. Real experience is harder to get right.

What we’re seeing more often is a gap between something working and something working well.

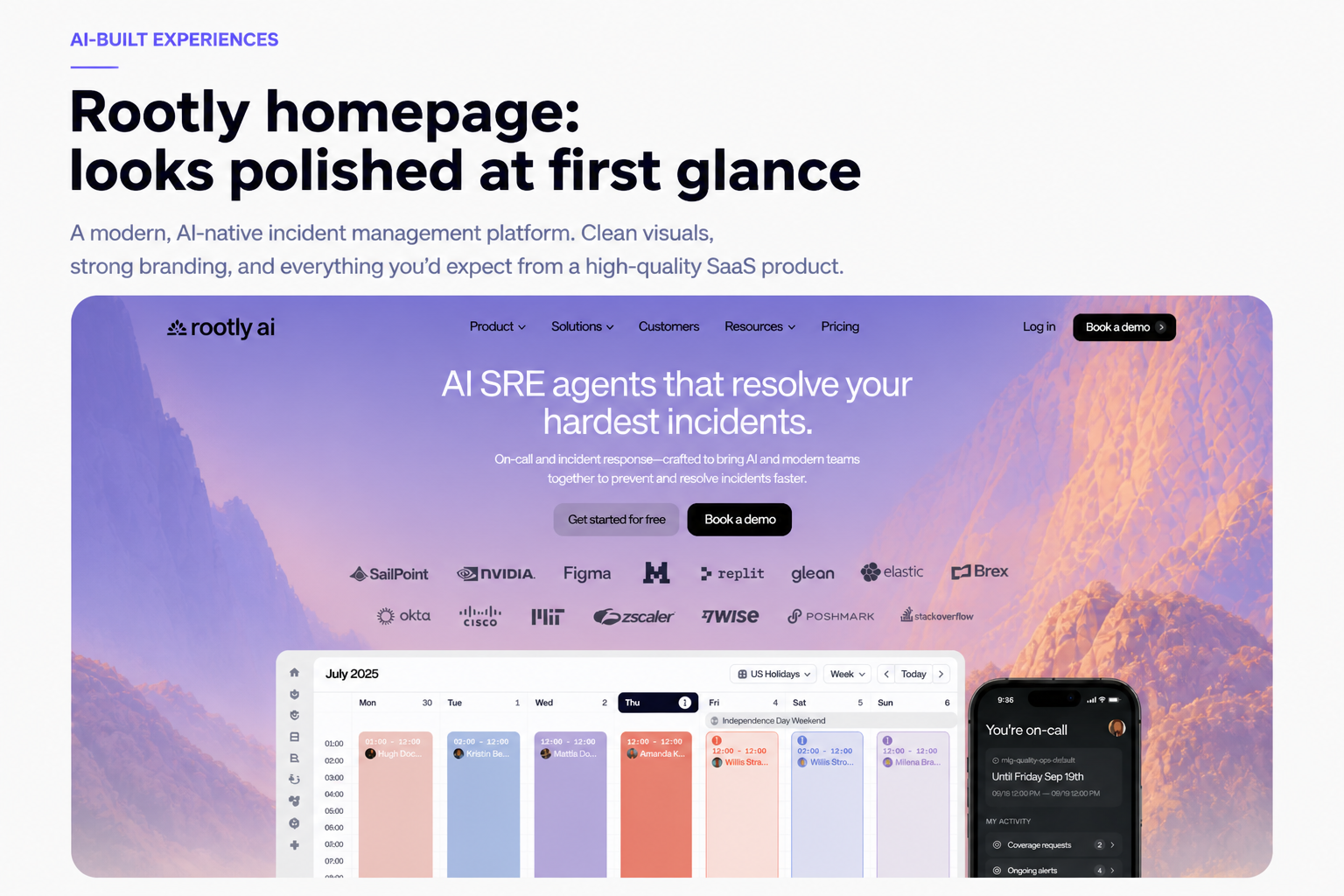

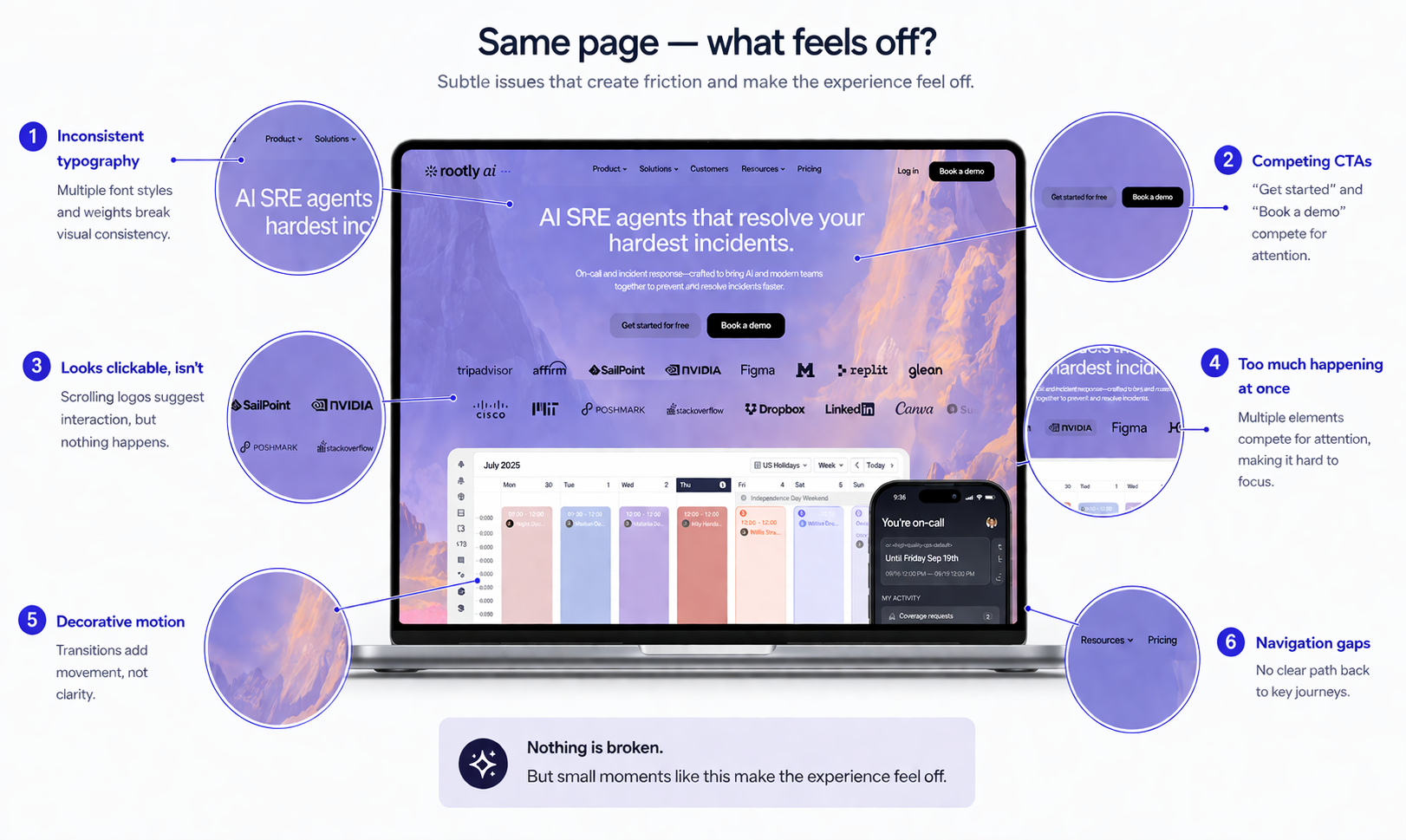

AI-generated products don’t usually fail in obvious ways. You’re not dealing with missing buttons or broken flows. Instead, you get something subtler. The structure looks right, but the hierarchy isn’t clear. The content sounds polished, but doesn’t really say anything meaningful. Calls to action are technically correct, but compete with each other.

Individually, none of these are major issues. Together, they create hesitation, and hesitation is what hurts conversion, engagement, and trust.

What “looks fine” often hides

When you look a little closer, these experiences start to reveal small points of friction that add up quickly:

- Navigation that gives too many equal choices

- Value propositions that sound good but don’t differentiate

- Layouts that don’t guide the eye or decision-making

- Multiple CTAs competing for attention

- Trust signals that feel generic rather than reassuring

Nothing here is technically broken, but the experience isn’t as clear or intuitive as it first appears.

Why this is harder to catch than traditional issues

Traditional QA, automation, and even AI-based testing are designed to check whether something works. They validate functionality, performance, and expected outcomes. But they don’t tell you how something feels to use.

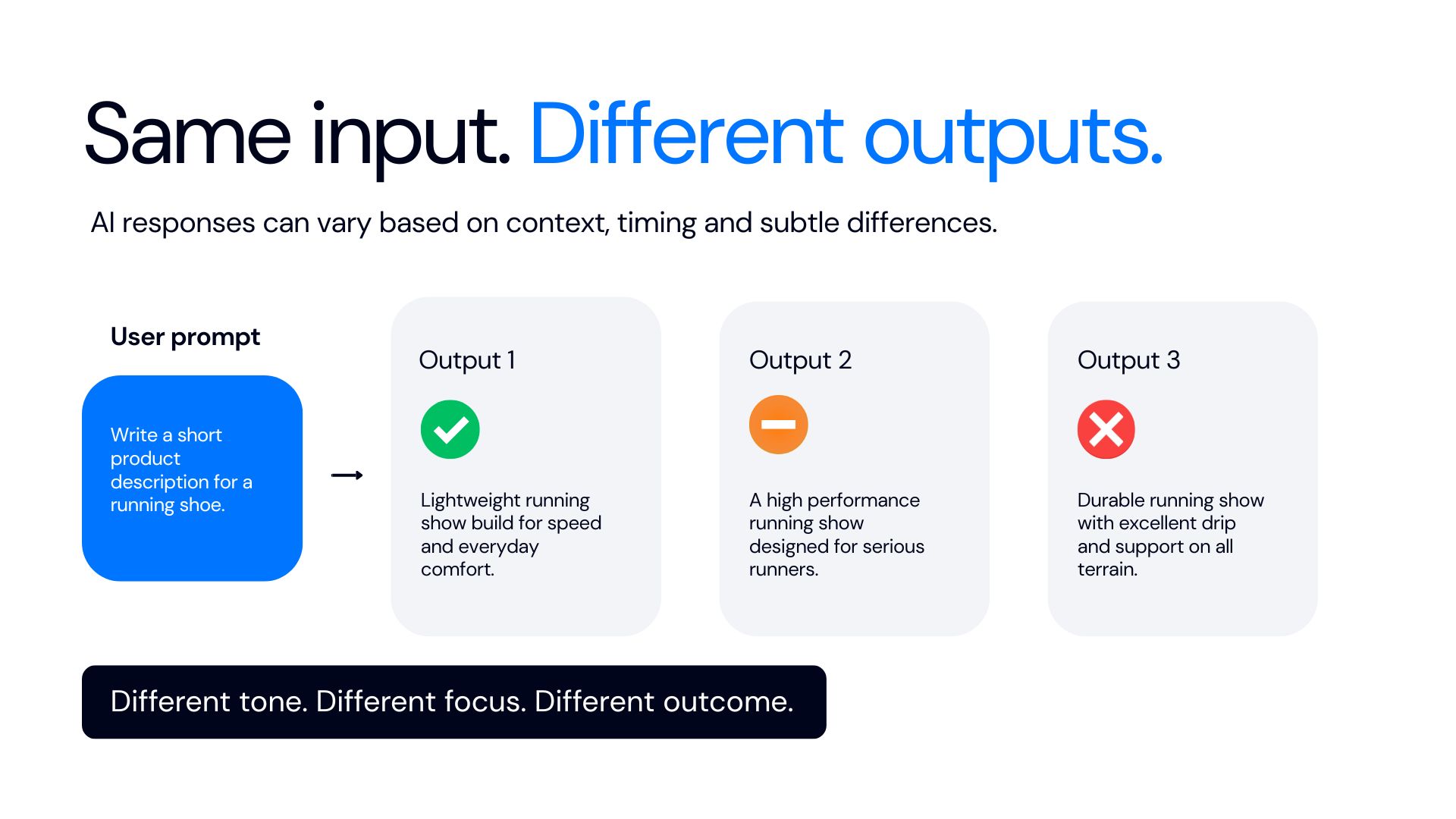

AI makes this harder because its output isn’t always consistent. The same prompt, with the same intent, can produce slightly different structures, wording, or behaviours depending on context. So even if something looks fine once, there’s no guarantee it will look or behave that way every time.

So the challenge isn’t just testing whether something works. It’s understanding how it actually behaves when real people use it.
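To make the consistency point concrete, here is a minimal sketch of one way a team might spot structural drift between generations of the same page. It reduces each HTML output to a rough "fingerprint" (heading outline plus button and link counts) and counts how many distinct fingerprints appear. The names `OutlineParser`, `fingerprint`, and `distinct_structures`, and the two sample variants, are illustrative assumptions, not part of any particular tool. A check like this can flag that outputs differ, but it says nothing about how either version feels to use.

```python
from collections import Counter
from html.parser import HTMLParser


class OutlineParser(HTMLParser):
    """Collects the heading outline and counts buttons and links in a page."""

    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.outline = []        # heading tags in document order
        self.counts = Counter()  # number of <button> and <a> tags seen

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self.outline.append(tag)
        elif tag in ("button", "a"):
            self.counts[tag] += 1


def fingerprint(html: str) -> tuple:
    """Reduce a page to (heading outline, button count, link count)."""
    parser = OutlineParser()
    parser.feed(html)
    parser.close()
    return tuple(parser.outline), parser.counts["button"], parser.counts["a"]


def distinct_structures(variants: list[str]) -> int:
    """Count how many different fingerprints appear across generated variants."""
    return len({fingerprint(v) for v in variants})


# Hypothetical outputs from the same prompt on two different runs.
variant_a = "<h1>Plans</h1><p>Pick one.</p><button>Start trial</button>"
variant_b = (
    "<h1>Plans</h1><h2>Why us</h2>"
    "<button>Start trial</button><button>Book demo</button>"
)

print(distinct_structures([variant_a, variant_b, variant_a]))
```

In this made-up example the second variant adds a heading and a second button, which is exactly the kind of small change that can introduce a competing call to action without breaking any functional test.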

Where real users change the picture

The moment you put a product in front of real users, you start to see what actually matters.

People don’t follow ideal journeys. They don’t read things in the order you expect. They bring assumptions, habits, and distractions with them. That’s when the gaps show up. You see where people hesitate, where they misinterpret something, or where they abandon a journey entirely.

Not because something is broken, but because it doesn’t quite make sense.

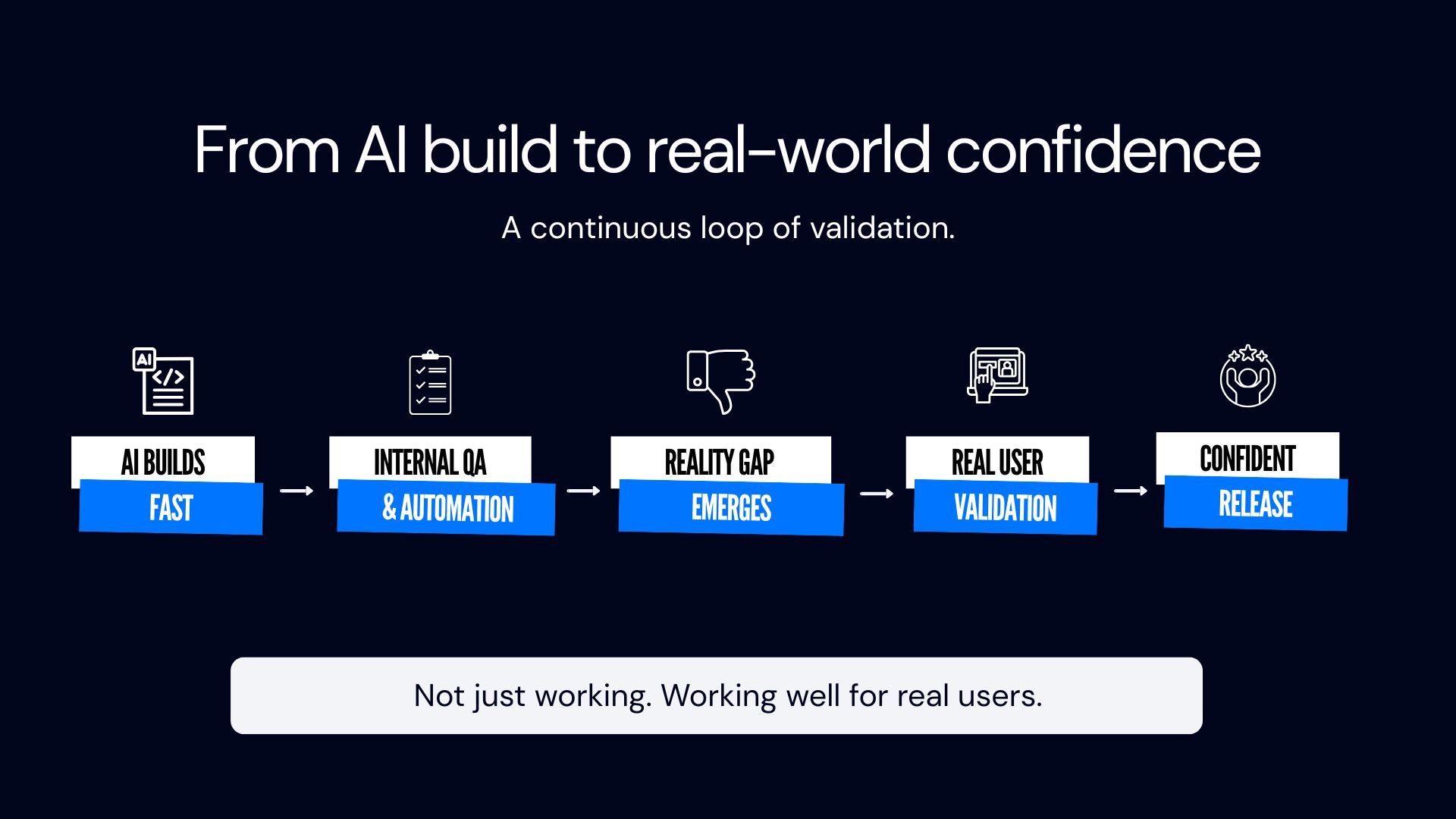

What many teams are now realising is that faster build cycles haven’t removed risk. They’ve just shifted it. The bottleneck is no longer development speed. It’s understanding how the product behaves in reality before it goes live.

This is where a validation layer becomes critical. Not another tool, not more automation, but a way of seeing how real people interact with what you’ve built, at the same pace you’re releasing it.

What this looks like in practice

Instead of relying purely on internal checks, teams are starting to introduce real user validation as part of their release cycle. That means:

- Putting journeys in front of real people before launch

- Understanding which issues actually impact users

- Prioritising based on behaviour, not assumptions

- Feeding that insight back into rapid iteration cycles

It’s not about slowing things down. It’s about making sure what you release actually works in the way users need it to.
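As a rough illustration of prioritising based on behaviour, the sketch below ranks journey steps by the share of sessions that stopped there. Everything in it is an assumption made for the example: the step names, the sample data, the `drop_off_by_step` helper, and the simplification that every session starts at the first step and is recorded only by the last step it reached.

```python
from collections import Counter

# Hypothetical journey steps, in the order users are expected to reach them.
STEPS = ["landing", "plan_selection", "details", "payment", "confirmation"]


def drop_off_by_step(last_steps: list[str], steps: list[str]) -> list[tuple[str, float]]:
    """Rank steps by the share of arriving sessions that went no further.

    `last_steps` holds the last step each session reached. The final step
    is excluded because ending there means the journey was completed.
    """
    ended_at = Counter(last_steps)
    reached = len(last_steps)  # assumption: every session reaches the first step
    results = []
    for step in steps[:-1]:
        if reached == 0:
            break
        left = ended_at[step]
        results.append((step, left / reached))
        reached -= left  # only the remaining sessions arrive at the next step
    return sorted(results, key=lambda item: item[1], reverse=True)


# Made-up sample: ten sessions, recorded by the last step each one reached.
sessions = (
    ["landing"] * 2
    + ["plan_selection"] * 4
    + ["details"] * 1
    + ["confirmation"] * 3
)

for step, rate in drop_off_by_step(sessions, STEPS):
    print(f"{step}: {rate:.0%} of arriving sessions stopped here")
```

A ranking like this shows where people stop, not why. The reasons still have to come from watching real people use the journey.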

Why this matters commercially

This isn’t just a UX discussion. When AI-generated experiences miss the mark, the impact shows up quickly:

- Lower conversion rates

- Increased drop-off in key journeys

- Reduced trust in the brand

- More reactive fixes after launch

The cost isn’t in building the product anymore. It’s in fixing it after users have already experienced it.

What hasn’t changed is the need to understand the people using what you build. If anything, that’s become more important. Because when everything looks like it should work, the only way to know if it actually does is to see it through real users’ eyes.

If you want to understand how your AI-built product actually performs in the real world, you can see how we approach it here: 👉 JourneyEval AI