Mobile device and app usage

There are 5.1 billion active internet users in the world, of whom 93% access the internet using mobile devices. Mobile and tablet devices account for 60.82% of worldwide online visits, with the remaining 39.18% coming from desktops. This trend towards increased mobile device usage looks set to continue steadily over the coming years.

In the not too distant past, companies concentrated on making their websites mobile friendly. Now company mobile applications are becoming the norm, and the benefits are substantial. However, applications carry risks that have less impact on a website.

As part of this eBook we surveyed 150 people, and we have used the findings to support some of the sections below. 80% of those surveyed are below the age of 40 and are likely to represent the demographics of most clients’ user bases now and over the next 10 years.

By testing the app thoroughly during its development and live release you can ensure your app gets installed, remains installed and is used regularly by your customers. You want your app to be one of the 30 apps that live in the prime real estate of a smartphone’s home screen, and even more optimistically, one of the nine apps users launch every day.

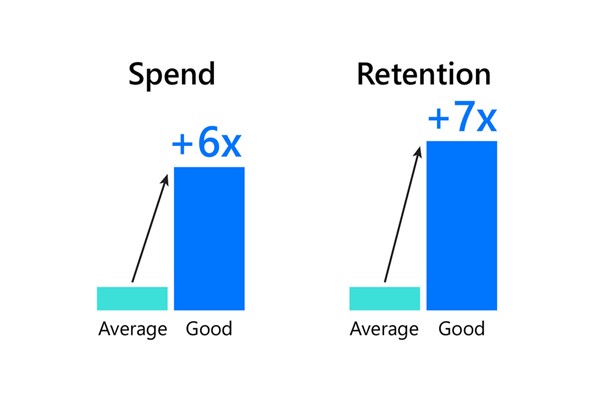

The following outlines the benefits of providing a quality app and the issues of having a poor quality app.

The benefits of a high quality mobile app

Sales growth

A mobile app is a new revenue channel that, when successful, can outperform other revenue channels. Feedback from Digivante clients indicates conversion rates up to 40% higher than for website traffic.

Customer communication

Push notifications can be used to deliver promotions and discounts direct to the customer, encouraging them to convert.

Geolocation technologies can be used to notify customers of special offers when they are close to your store. They can also be used to tell customers that you have a store nearby if they approach a rival or related store.

Store mode

When customers enter your physical store, your app can deliver an omnichannel experience that melds traditional bricks and mortar with digital operations. This is referred to as Store Mode and can include services such as:

- Indoor navigation via Bluetooth beacons

- Interactive mapping

- Geofencing to deliver unique marketing messages based on their location instore

- Scanning of products to check stock levels

- Help requests to an instore assistant

- Purchase completion without going to a checkout.

Customer loyalty programs

A loyalty program increases the chances of having a repeat customer base. App only offers, rewards for regular logins, free items after a number of purchases and one day offers all encourage customers to return to your app. Push notifications that remind customers of these offers, or tell them how close they are to completing or losing an offer, also play into customer loyalty.

Direct marketing


Constant reminder

If your customer loves your app, it will likely be saved on the home screen of their phone. Every time they access their phone it’s a constant reminder, and your brand is at the forefront of your customer’s phone usage journey. In the UK and USA, people spend on average between 3 and 4 hours per day on their mobile devices. That’s a lot of time for your brand to be visible to your end customer via your app.

Always with the customer


App is mobile friendly

As an app is developed to be used on a mobile from its inception, it will likely be more mobile friendly than a website that has been optimized for mobile usage. Therefore, the app will be focused on mobile user journeys and provide a better user experience.

67% of those surveyed highlighted this as a reason to use an app rather than a website on mobile.

Trust

An app is a standalone installation that has to pass through multiple quality gates and checks before it is available via the Google or Apple Stores. 57% of users surveyed said they would trust using an app over using a website.

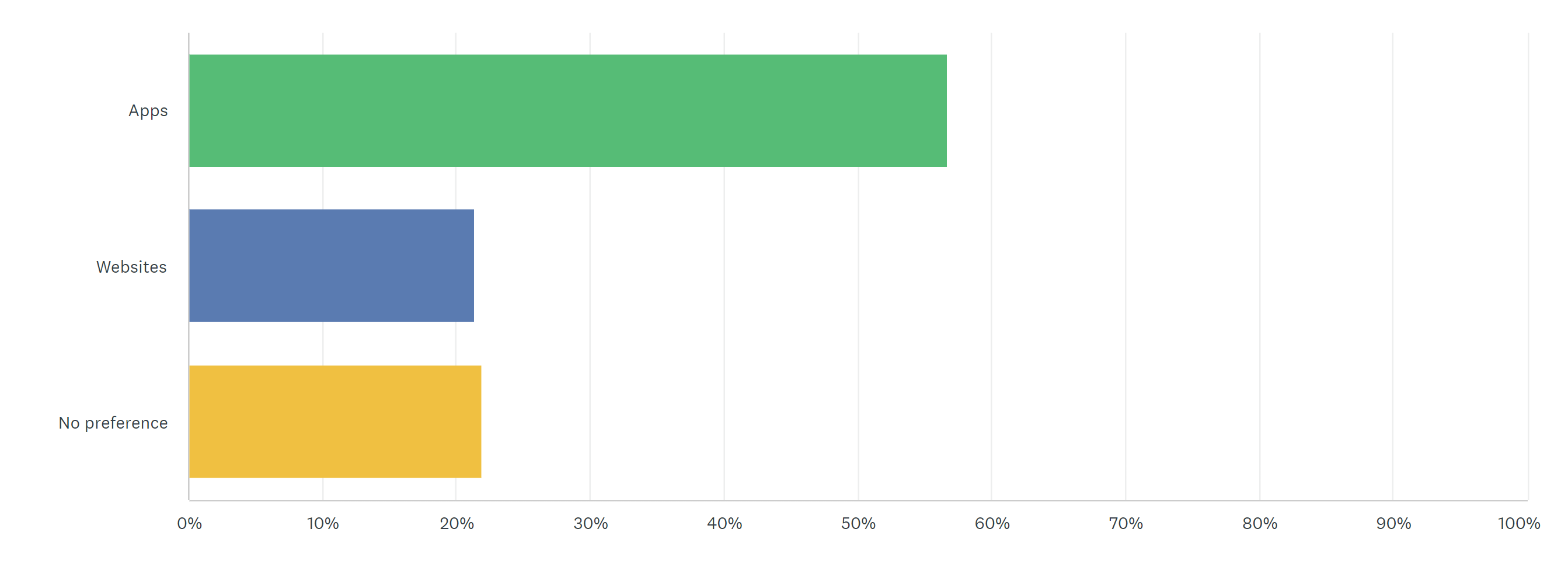

When using your smartphone, which of the following do you generally trust more, apps or websites?

Analytics

An app can have its own tracking and monitoring built in, which can provide invaluable data on customer buying habits and geolocation. You can use this information to provide customers with unique offers that relate directly to them. Unique, customer centric contact is the future of increasing customer conversion rates, and this data can help you achieve that.

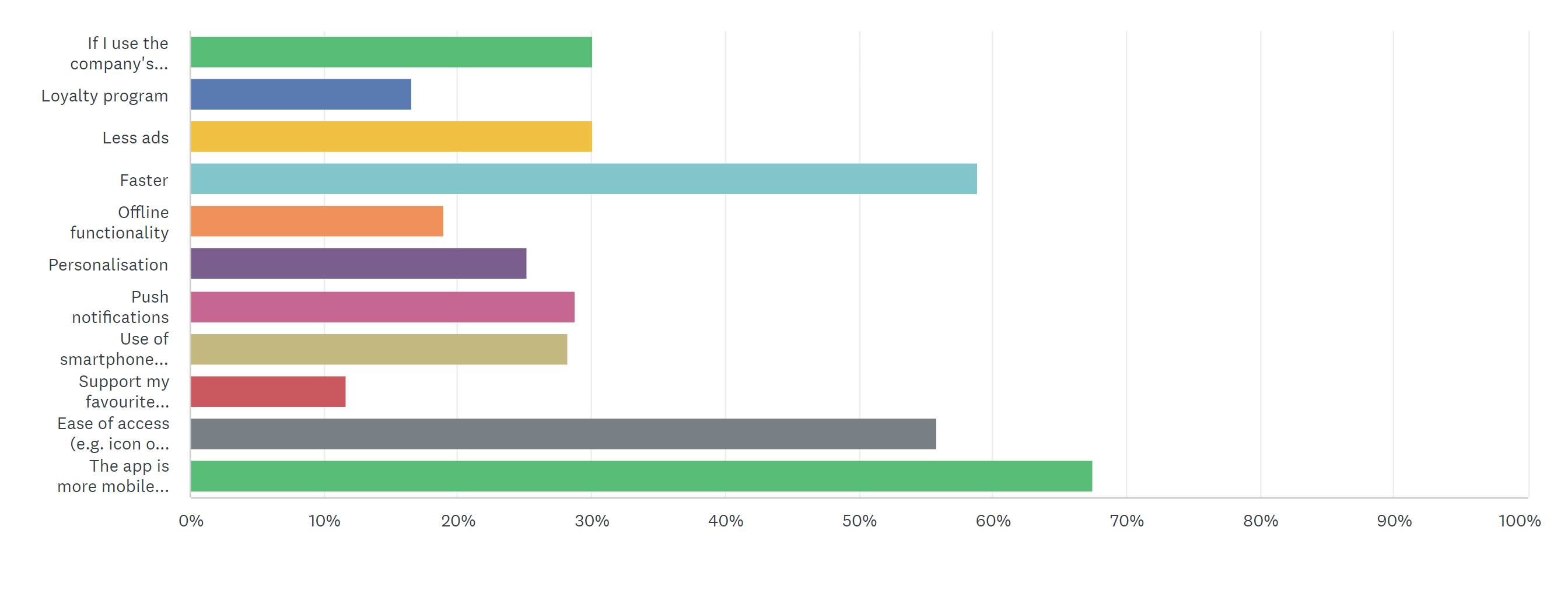

Survey results – benefits of an app over a website on mobile

Our survey indicates that the top 3 reasons for using an app instead of a website are:

- Faster performance

- Ease of access (icons, touch screen etc).

- Mobile friendly

These are all areas that hinder websites on mobile devices and are often under tested.

Which of these benefits would convince you to use a company’s app rather than their website?

The issues with having a poor quality mobile app

Bad reviews

The Google and Apple stores allow users to post reviews quickly, and these reviews directly influence whether other users install the app.

Our survey found:

- 68% would not install an app if its rating was under 3 stars

- 77% would not install if the 10 most recent reviews were mainly negative

- 10% would not install if the 3 most recent reviews were negative (generally a maximum of 3 are shown on screen when scrolling to Ratings and reviews).

App reviews can be published very quickly and emotively, meaning the reviews are worse than they would be if more thought went into them. People will often post 5 stars for an excellent experience or 1 star for a really poor one. There is very little middle ground that motivates people to leave a review.

Unused app

Mobile phones and tablets now inform users when apps have been unused for a period of time. This encourages the user either to hibernate the app, which stops any further notifications, or to uninstall it.

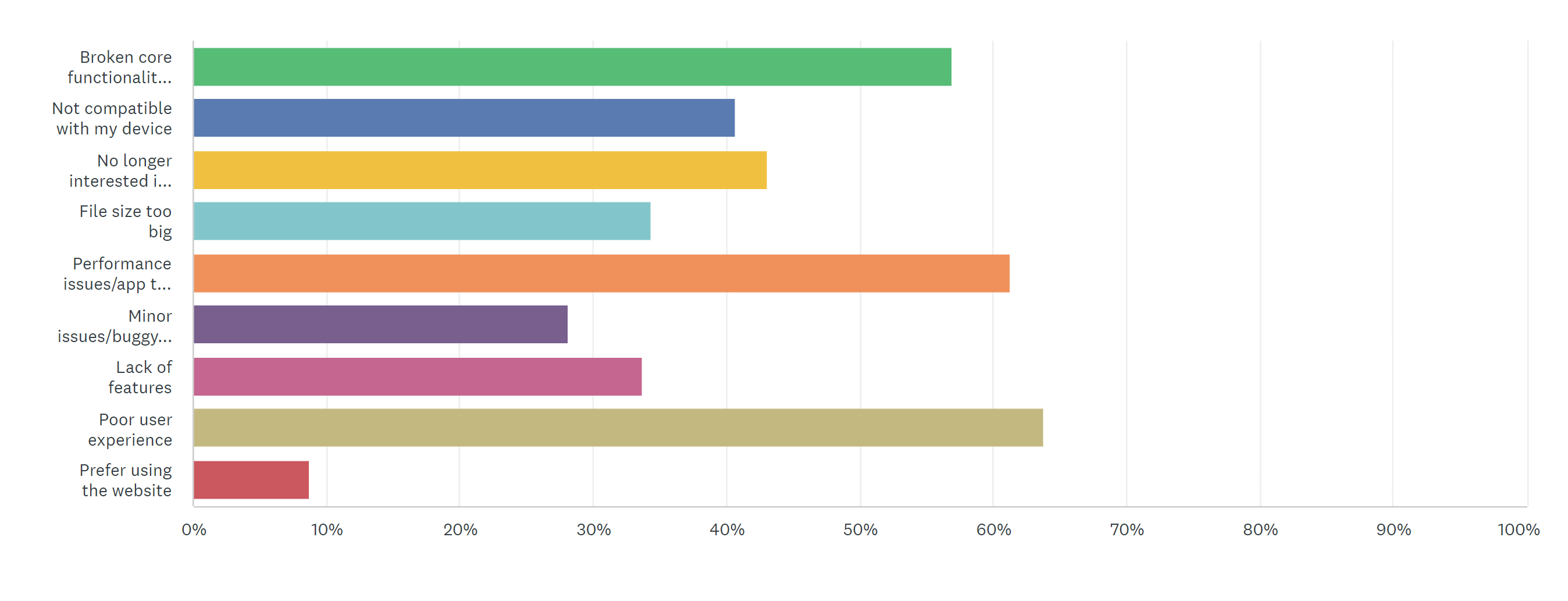

Uninstallation of app

28% of apps are uninstalled after 30 days regardless of the quality of the app. This usually relates to the app not being used or only being used for a specific task. If the app performed badly, there is a bias towards never installing it again. First impressions have a big impact.

We asked the following question, and the results show that users will uninstall an app within 1 week if an issue hasn’t been fixed, so finding and fixing issues urgently is critical.

If an app you used had a bad patch/update that broke core functionality, how long would you give the developers to fix it before uninstalling it?

The 3 most common reasons for uninstalling are:

- Broken core functionality

- Performance issues

- Poor user experience.

These are all issues that could be avoided prior to a release of a new version.

Which of the following options would most likely convince you to uninstall an app?

A negative brand impact

If a customer has gone to the effort of installing an app, they are clearly interested in your brand and want to be a regular user. But a negative experience when using the app can harm the customer’s trust in the brand and may put them off other channels in the future.

Directing customers from website to mobile app

Many companies are now pushing customers towards an app and away from their website to gain the benefits documented above. Customers who are directed to the app rather than the familiar website journeys expect similar journeys and functionality in the app. If the app doesn’t work or is less feature rich than the website, this can be very frustrating, and you may lose the customer entirely.

Not compatible with user devices

Delivering an app that is not compatible with your users’ devices will frustrate them and lose you users. If you upgrade the app and existing users find it no longer works, or performs worse than before, this will again lead to frustration and lost users.

What is mobile app testing?

Mobile app testing involves testing an app launch, major updates, minor patches or content changes. Each of these comes with its own risks and issues. Testing aims to mitigate these during the development cycle or, if issues reach live, to minimize customer impact as soon as possible.

Mobile app developer guidelines

A mobile app has to be built and made available in the Google Play Store and/or Apple Store. Both provide guidelines for ensuring your app meets their requirements. The guidelines are detailed and can be found via the links below.

- Apple App Store Guidelines – App Store – Apple Developer

- Google Play Store – Developer Policy Center (google.com)

Mobile app test stores

Both Apple and Google provide test environments that allow apps to be uploaded and tested prior to go live. Releasing an app to a test team or to a restricted set of customers is the best way to ensure it is working as expected prior to a live launch. These are the testing portals which can be used.

- Apple TestFlight – TestFlight – Apple

- Google Play Console – Open testing | Google Play Console


The challenges of mobile app testing

An app is a standalone installation rather than a website that can simply be navigated to via a browser. Application testing therefore has some unique challenges beyond those of website testing, which is detailed in the complete guide to website testing.

Mobile application install

When installing an app you need to consider how much space it requires, and ensure end users are aware of this before they install.

Does the app install correctly on all devices that are considered in scope? Companies will often state that the app works on as low an OS version as possible to achieve a wider user base, but often don’t test on the lower end of the OS scale to ensure the app installs without any issues.

If the app fails to install correctly, what behaviours can be expected, e.g. error codes or phone crashes? If you know what the possible failures are, then FAQs can guide the user to a successful outcome.
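One way to keep the lower end of the OS scale visible is to compare the versions you claim to support against the versions an install test has actually run on. The following is a minimal sketch only: the version lists and the `untested_versions` helper are hypothetical and should be replaced with your own scope.

```python
# Hypothetical values for illustration only; substitute your app's real scope.
SUPPORTED_OS_VERSIONS = ["10", "11", "12", "13", "14"]  # versions the app claims to support
TESTED_OS_VERSIONS = {"12", "13", "14"}                 # versions an install test has run on


def untested_versions(supported, tested):
    """Return supported OS versions with no install test, in declared order."""
    return [version for version in supported if version not in tested]


gaps = untested_versions(SUPPORTED_OS_VERSIONS, TESTED_OS_VERSIONS)
print("Install not yet verified on OS versions:", gaps)
```

Any version that appears in the output is one where the install claim is currently unverified.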

Battery usage

How much battery does the app use when it is actively running or running in the background? With so many apps vying for a user’s attention, power hungry apps are often uninstalled. Testing an app over a period of time to assess battery usage, and monitoring it regularly, ensures your app performs at acceptable levels for an end user.
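As a rough illustration of assessing battery usage over time, the sketch below turns a series of battery readings into an average drain rate and compares it with a budget. The readings, the `drain_per_hour` helper and the threshold are all assumptions made for the example, not measured data or a recommended limit.

```python
def drain_per_hour(samples):
    """Average battery drain in percentage points per hour.

    `samples` is a list of (minutes_elapsed, battery_percent) readings,
    ordered by time, taken while the app under test is running.
    """
    if len(samples) < 2:
        raise ValueError("need at least two readings")
    (start_min, start_pct), (end_min, end_pct) = samples[0], samples[-1]
    elapsed_hours = (end_min - start_min) / 60
    if elapsed_hours <= 0:
        raise ValueError("readings must span a positive time interval")
    return (start_pct - end_pct) / elapsed_hours


# Hypothetical readings and threshold, for illustration only.
readings = [(0, 100), (30, 97), (60, 94), (120, 88)]
MAX_ACCEPTABLE_DRAIN = 8.0  # percentage points per hour; an assumed budget

rate = drain_per_hour(readings)
print(f"Drain: {rate:.1f} points/hour, within budget: {rate <= MAX_ACCEPTABLE_DRAIN}")
```

Running the same calculation on each release makes it easier to spot a version that suddenly uses more power than the last.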

Getting real user feedback

The approach to receiving and managing user feedback is very important. Although you can set up an app in a test environment so it’s available to a limited number of users in order to get feedback prior to live, it can be difficult to coordinate results or get users to proactively test the app for you. If the test version of the app causes crashes or impacts the user’s device, they will be concerned about the quality. End users are not testers and therefore, even during testing, have an expectation of quality.

If you launch an app in live to a limited number of customers and they give feedback via reviews in the stores, this can have a negative impact on user installations when the app is rolled out to a wider audience. Although soft launches can have a benefit, they can have a very negative impact on a release if not managed correctly. Any soft launch like this should have its expected outcomes, both positive and negative, documented so the senior management team understands what the results may look like, i.e. good to go live, or the release will need to be delayed and more testing is required.

Cross-device and cross-platform testing

There are thousands of different screen sizes, operating systems and launcher options available to customers. A user quickly becomes a subject matter expert (SME) on their own device and understands how it behaves. Replicating all of these expert users and understanding their behaviours can therefore be very difficult.
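To show how quickly the combinations multiply, here is a minimal sketch that builds a test matrix from a deliberately small scope. Every value in the lists is a hypothetical placeholder. The one assumption encoded in the filter is that third-party launchers apply to Android only.

```python
from itertools import product

# Hypothetical scope for illustration only.
screen_sizes = ["small", "medium", "large", "tablet"]
os_versions = ["Android 12", "Android 13", "Android 14", "iOS 16", "iOS 17"]
launchers = ["default", "third-party"]

# Build every combination, skipping third-party launchers on iOS.
matrix = [
    (screen, os_version, launcher)
    for screen, os_version, launcher in product(screen_sizes, os_versions, launchers)
    if not (os_version.startswith("iOS") and launcher == "third-party")
]

print(len(matrix), "device configurations to cover")
```

Even this tiny scope produces dozens of configurations, which is why device coverage is usually prioritised rather than exhaustive.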

Language coverage

If your app is going to be used in multiple countries, you need to be able to test different languages and the impact they have on content, for example whether the language reads from left to right or right to left, and whether words are shorter or longer.
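Both concerns can be checked early, before real translations exist. The sketch below is illustrative only: it uses Python’s standard `unicodedata` module to detect right to left text, and a simple padding function to simulate longer translations. The 40% growth figure, the character budget and the helper names are assumptions for the example.

```python
import unicodedata


def is_right_to_left(text):
    """True if the first strongly directional character is right-to-left."""
    for ch in text:
        direction = unicodedata.bidirectional(ch)
        if direction in ("R", "AL"):  # Hebrew, Arabic and similar scripts
            return True
        if direction == "L":          # Latin and other left-to-right scripts
            return False
    return False


def pseudo_expand(text, growth_percent=40):
    """Pad a string to simulate a longer translation (assumed growth rate)."""
    extra = (len(text) * growth_percent + 99) // 100  # round up to whole characters
    return text + "~" * extra


def fits(text, max_chars):
    """Crude layout check: does the label fit a character budget?"""
    return len(text) <= max_chars


label = "Add to basket"
print(fits(label, 15), fits(pseudo_expand(label), 15))
print(is_right_to_left("Checkout"), is_right_to_left("שלום"))
```

A label that fits in English but fails after expansion is a candidate for truncation or wrapping issues once the app is translated.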

Regression testing

Regression testing ensures existing functionality doesn’t break or deteriorate over time as new features are constantly added. Managing both new feature testing and regression testing is often difficult. Regression packs are often neglected, so they no longer accommodate changes or run consistently.

The importance of early detection

Fixing defects late in your development process is very expensive and complex.

Why? Let’s look at the lifecycle of a defect from identification to fix when it’s in production.

If a defect is reported by a customer, that customer must report it to a call centre. The call centre operator takes down the necessary details and then it is sent to their manager. The defect is passed to the tech department, where a developer works on it. But the developer often cannot reproduce the defect in their test environment. So, it goes all the way back to the source and the cycle starts again.

What’s more, an undiagnosed defect in your live app may cause ongoing instability and you could lose customers without understanding the root cause. Any such defects could also cause a domino effect, where you fix one thing only to unleash a raft of new defects.

So what can you do?

You could introduce a regular code reviewing process to help with quality assurance and optimise your coding cycle from the outset. However, to truly mitigate the impact of defects in your app, you need to implement app testing as early as possible into your development lifecycle.

Common mobile application testing methods

There are a number of common testing methods used to ensure an app meets the requirements of the end users:

A crowd of global testers can be used to deliver high levels of device coverage and speed of execution. Using a portal allows for all testing and results to be delivered to the client.

Testers, either internal or outsourced, can use personal or company supplied devices to carry out new feature, regression and exploratory testing. This approach is often limited in device coverage, with scope reduced to meet deadlines, as execution can be slow.

Emulators are often most useful during development or to provide limited coverage during testing.

Emulators are limited to what you select and setup and require an expert to use the tool properly. The tester is also acting as a user. If you are an Apple user, Android will be very alien to you and vice versa.

Automation testing for applications isn’t as defined or commonly understood as website or software application testing on desktops. Automated testing during development is a must, but in later stages of testing it is still very much a new area. It’s very complex and takes time to get right. Coverage is hard gained and costly, so it is best practice to automate the user journeys that are used most frequently.
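Choosing which journeys to automate first can be as simple as counting how often each one occurs in your usage data. This is a minimal sketch that assumes you can export a list of completed journeys from your analytics. The journey names and the `top_journeys` helper are hypothetical.

```python
from collections import Counter

# Hypothetical analytics export: one entry per completed user journey.
journey_log = [
    "login", "search", "checkout", "login", "search", "login",
    "update_profile", "search", "login", "checkout",
]


def top_journeys(log, n):
    """Return the n most frequent journey names, most frequent first."""
    return [name for name, _count in Counter(log).most_common(n)]


print("Automate first:", top_journeys(journey_log, 3))
```

Journeys that rarely occur can stay in manual or exploratory testing until the high traffic paths are covered.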

How to decide on the best mobile application testing strategy for your business

Assess all the testing methods available. Do not write off any options too early as usually the best approach is to apply a combination of methods. Each method may provide different benefits at different phases of your software development lifecycle so be open to this.

Each phase will have different problems so choosing the right solution is what’s important. If the right solution can’t be implemented due to budget then apply the next most suitable option. Having no solution is a huge risk which leaves you vulnerable to the issues of having a poor mobile app.

How to get started with mobile application testing

Most companies start by carrying out testing internally using personal devices and then move to a set of company owned devices. This is a good starting point, as it shows that testing is being considered and some level of testing is being carried out. However, this approach isn’t scalable and often sees devices become redundant within 2 years of purchase, so it’s worth considering alternatives as early as possible. If you don’t test enough, the damage caused can be hard to recover from.

Do some research into emulators and crowd testing solutions. There are always people to talk to who can provide feedback or guidance on your mobile application testing journey.

As you reach out to different testing companies, find out what services they offer, request testimonials and examples of work from previous clients, and ask for comparable quotes on specific projects.

The best testing companies will provide you with a quality service for many years and will be recognised by their clients as proven QA professionals.

If you’re interested in exploring a project with an outsourced QA and testing company, get in touch.