Despite the pressure to ‘budget dump’ or face the year-end “use it or lose it” backlash, it pays to think strategically about your organisation’s future objectives before spending. With the end of the financial year in sight, now is a great time to review your current processes and providers. Doing so helps you identify gaps in your software development strategy and puts you on the best path for the next year.

If your objectives include improving conversions, raising customer satisfaction or even entering new markets, it would be wise to allocate a portion of your budget to additional testing. Investing in a testing service provider that makes your job easier is one of the smarter ways to spend your remaining budget before the year concludes. Here are a few ways to put that budget to good use in QA:

1. Complete an accessibility audit

7 in 10 disabled customers report leaving websites that they find hard to use, and an estimated £17 billion+ is lost by websites that do not support people with disabilities. In the UK, the goal is to meet the WCAG 2.2 guidelines, which set the standard and cover both the programmatic structure of a site and its content. Completing an accessibility audit and then resolving the issues it finds is a quick way to bump up your conversion rate, yet many companies fail to consider this valuable market. Whilst it is tricky to provide statistics on the exact benefits, it is estimated that 7-10% of users find an accessible site easier to use, and hence they are more likely to convert on a site that meets their needs.
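To give a flavour of what the ‘programmatic structure’ part of an audit looks at, here is a minimal Python sketch of one automated check: finding images that have no `alt` attribute, which relates to the WCAG success criterion on non-text content. The sample page and the function name `images_missing_alt` are made up for illustration, and a real audit covers far more than this, including manual testing with assistive technology.

```python
from html.parser import HTMLParser


class MissingAltChecker(HTMLParser):
    """Collects the src of every <img> tag that has no alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        # An empty alt="" is allowed for decorative images, so only a
        # completely absent alt attribute is reported here.
        if tag == "img" and "alt" not in attributes:
            self.missing.append(attributes.get("src", "(no src)"))


def images_missing_alt(html):
    """Return the src values of images in `html` that lack an alt attribute."""
    checker = MissingAltChecker()
    checker.feed(html)
    return checker.missing


# Hypothetical page fragment, invented for this example.
SAMPLE_PAGE = """
<html><body>
  <img src="logo.png" alt="Company logo">
  <img src="banner.jpg">
  <img src="divider.png" alt="">
</body></html>
"""

if __name__ == "__main__":
    print(images_missing_alt(SAMPLE_PAGE))
```

Checks like this catch only the mechanical issues. Whether the alt text is actually meaningful still needs a human reviewer.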

2. Bring in fresh eyes

If a small internal team has spent the whole year looking at your site to ensure it works, it is unfortunately likely that the team is ‘used’ to how the site looks and works. They are therefore unlikely to find the issues that customers who are less familiar with the site will face.

Now is a good time to run a full, exhaustive test of the site or app to identify any issues that exist. Combining fresh eyes with a wide range of mobile and web platforms means that areas which may have been overlooked are far more likely to be spotted.

Fixing these issues is likely to yield an improvement in conversions. This comment was provided after a recent test:

“We saw a 16% revenue increase within the first two weeks and a significantly improved profit margin.”

Keith Corrigan, Ecommerce Project Manager, Simon Jersey

3. Build and execute a regression pack

One of the most important things that can be done to improve UAT quality is to build a reliable, high-coverage set of regression test scripts that cover the core customer journeys. It is also important to engage an organisation capable of executing these throughout the year and of maintaining them so that new functionality is covered as it is added.

Specsavers endorse this approach to managing regression testing for their website.

“We have dramatically increased test coverage for the deliverables making it to production. In doing so, we’ve achieved an unprecedented level of acceptance. We would never, ever have been able to achieve this with internal resources alone.”

User Acceptance Test Manager, Specsavers

This frees up the internal team to focus on new areas of functionality in more detail, so they won’t get bogged down with the more mundane and repetitive task of re-running each regression pack. Furthermore, management can be confident that the regression pack has been executed thoroughly, across a range of different platforms, and that the site meets quality expectations. In turn, this helps ensure the smoothest possible experience for customers and curbs drops in conversion rates.

4. Performance testing

We all know that slow websites and apps annoy users. Still, only a few companies check the actual customer experience across a range of environments and networks; most focus only on server capability. Whilst server capability is an important metric that should be regularly checked at, and above, peak requirements, failing to understand the real-user experience, especially for internationalised experiences, can lead to a big thumbs down from customers.

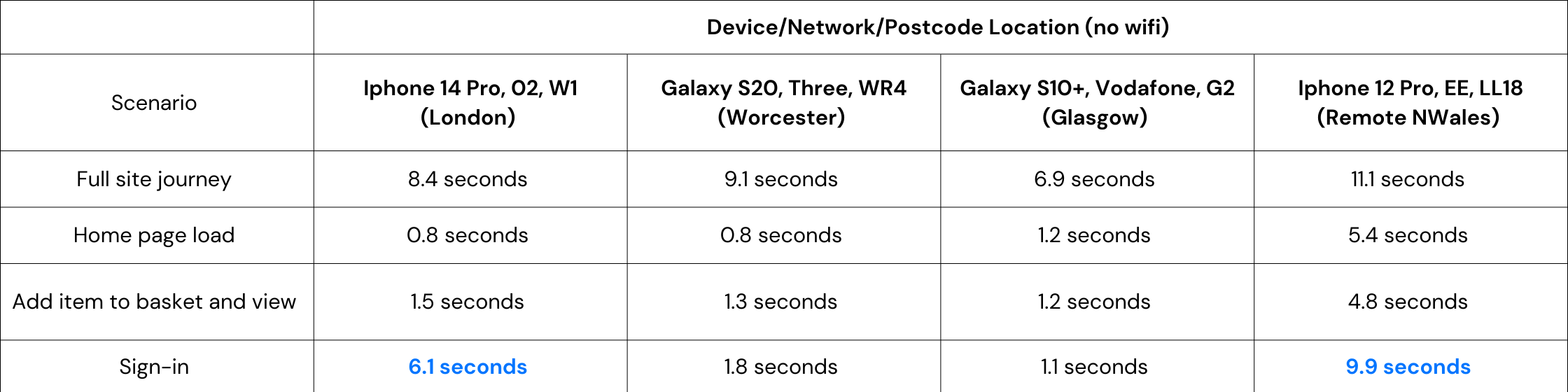

A comprehensive review will include not only the peak performance tests, but also a matrix of common devices and networks in the target countries. This might look like the following (assuming the digital experience is used in the UK):

In this sample test (a real one would have a wider set of results), it is possible to see that there is an issue with iPhone on sign-in pages (as highlighted) and that the remote location in Wales sees a specific drop in performance.
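If the results of such a matrix are captured as data, highlighting the problem cells can be automated. The Python sketch below assumes a simple structure of (device, network and location, page) mapped to a load time in seconds. Every figure in it, and the 3-second threshold, is invented for illustration and is not taken from the sample test above.

```python
# Assumed performance budget in seconds; pick one that suits your own site.
THRESHOLD_SECONDS = 3.0

# Hypothetical results: (device, network and location, page) -> load time in seconds.
RESULTS = {
    ("iPhone", "4G London", "home"): 1.8,
    ("iPhone", "4G London", "sign-in"): 4.6,
    ("Android", "4G London", "home"): 1.9,
    ("Android", "4G London", "sign-in"): 2.1,
    ("Android", "3G rural Wales", "home"): 5.2,
    ("Desktop", "Broadband Manchester", "sign-in"): 1.2,
}


def slow_cells(results, threshold):
    """Return (key, seconds) pairs whose load time exceeds the threshold, sorted by key."""
    return sorted(
        (key, seconds) for key, seconds in results.items() if seconds > threshold
    )


if __name__ == "__main__":
    for (device, network, page), seconds in slow_cells(RESULTS, THRESHOLD_SECONDS):
        print(f"{device} / {network} / {page}: {seconds:.1f}s")
```

Keeping the device, network and page as separate fields makes it easy to see whether a slowdown follows a device, a location or a particular page.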

5. Remote usability testing

It is estimated that just a 1% improvement in usability will create a 2% increase in conversion rates.

With the focus on delivering more features and capability as quickly as possible, it can be easy to forget that the real focus should be the customer. But how do you really get an understanding of what customers think?

Remote usability testing is the answer. If you haven’t come across this before: in standard usability testing, people are assembled into a ‘lab’, whereas remote usability testing allows ‘real users’ to talk through their experience of your website or app in the comfort of their own home and on their own device, without the pressure of ‘giving nice feedback’.

The results are then compiled by a usability specialist into actionable improvements, with evidence, so that the site can be improved and made more suitable for the key users.

Users can be pulled from many different demographics, whether that is male users over the age of 70 in the South West, or female iPad users over the age of 55 in London with an interest in cooking.

Incorporating key user feedback helps ensure that the site or app works as well as possible for the target users and can bring great improvements in conversion rate. It is estimated that the conversion rate can be improved by as much as 200%.

Conclusion

With the right investment in the right testing service provider, you may have more than a budget increase to look forward to next year. So now is the best time to use any leftover budget to trial new approaches such as Digivante Crowdtesting services, which can be delivered either as one-off projects or as part of an ongoing partnership.

Why choose Digivante?

Digivante provide a range of software testing services that help clients boost their conversion rates, as outlined above. Proven with large enterprises, yet agile enough to manage all clients, Digivante is unique in providing an ‘at-scale’ crowdsourced solution utilising our community of over 50,000 professional testers in over 150 countries.

To speak to us about investing in testing, get in touch today.